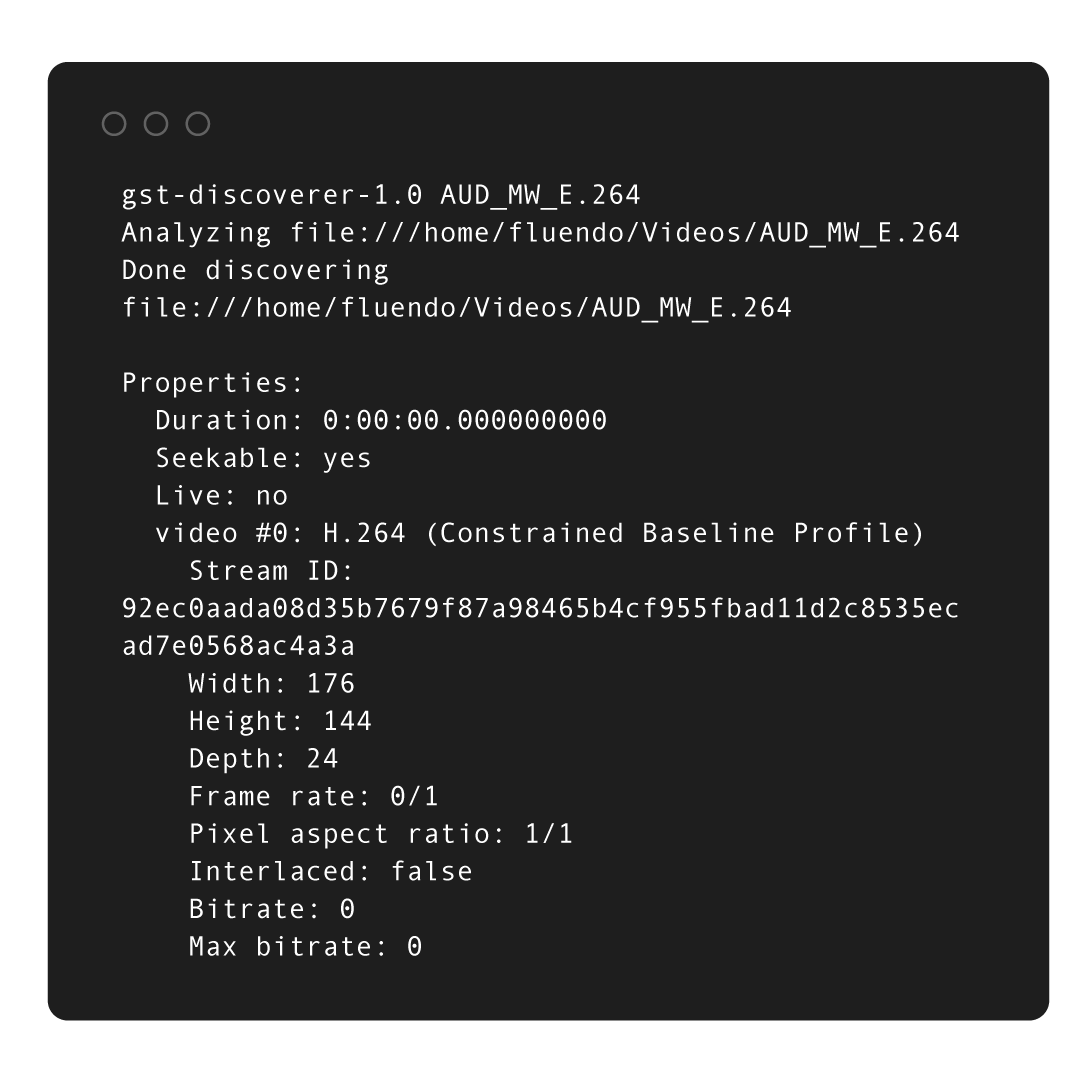

Gst-Audit: The instrumentation tool for your pipelines

gstreamer, open-source, events, beaver

This blog post provides an overview of the Gst-Audit open source project, how it was born, and the technical challenges of building it.

At Fluendo Lab, we’re advancing technologies for digital experiences. We work on video processing, interactive content creation, and audio enhancement. Our research covers AI-based computer vision, next-generation codecs, and multimedia frameworks for edge devices. We maintain an open dialogue with stakeholders and aim to transform how people enjoy, communicate, and work with digital content. Join us as we redefine the boundaries of digital media experiences.

The heart of the Lab’s work is to create innovative multimedia technologies that allow for more seamless communication, collaboration, and enjoyment.

At Fluendo Lab, we value open dialogue and collaboration with our team and beyond. We welcome insights and ideas from our stakeholders, such as users, customers, and the tech community. They help shape the future of multimedia technology with their experiences, needs, and ideas. They guide our research and ensure our solutions are user-focused and effective.

We are using AI-based computer vision to enhance user experiences. This research line is about expanding the possibilities of multimedia, enabling users to interact with digital content in new and exciting ways.

Our codec research focuses on improving the encoding and decoding processes to deliver better quality at lower bitrates and with lower computational requirements. This reflects our commitment to providing high-quality multimedia experiences, even in resource-limited scenarios, and to evolving with our partners’ needs.

We’re innovating in robust multimedia frameworks for edge devices. Our focus is on performance and efficiency, enabling seamless multimedia experiences on devices with limited resources.

Our in-house, multi-platform SDK speeds up our research into new solutions for common problems.

Our adeptness at hardware acceleration opens up possibilities for tackling novel use cases.

All of these tools are designed as optional parts of a larger architecture, so we can assemble an optimal toolset for each problem.

We’ve distilled our expertise in multimedia technologies into a tool designed to substantially speed up new developments.

By merging off-the-shelf and custom models, we are able to reduce our time to market while keeping state-of-the-art performance.

Lynx is building a codec with technologies like LCEVC, screen content coding tools, and AI-based content-aware encoding for great performance at lower costs. Lynx is also making power-efficient codecs that reduce CO2 emissions from video processing.

We aim to drastically speed up VOD transcoding by parallelizing the work across the cores of a single GPU, across several GPUs, or both.

We have developed a multi-platform AI inference SDK. It supports C++, C#, and Python and works with embedded boards. It supports GPU-accelerated AI pipeline execution. Its APIs enable detection, segmentation, and much more.

Bits & Bytes

Explore our blog, one byte at a time. Our team unpacks our latest news, industry insights, and in-depth articles to connect you with the multimedia world.

Watch Fluendo's technical talks from the GStreamer Conference 2025 in London.

Redefining real-time AI inference with Raven's GPU-driven architecture.

Efficient bounding box post-processing using GPU-native NMS-Raster.