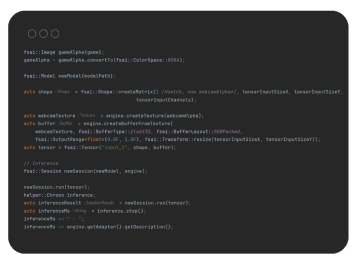

Raven: A 100% GPU-driven AI inference framework for real-time video and graphics

ai, multimedia-edge-ai, fluendo-ai-plugins, raven

Redefining real-time AI inference with Raven's GPU-driven architecture.

Driven by our Raven AI Engine, these AI-powered plugins offer production-ready solutions optimized for Edge AI, delivering cross-platform compatibility across a wide range of devices, from desktop PCs to embedded systems.

Seamless workflow integration

Easily integrate advanced AI features into existing GStreamer workflows without requiring extensive reconfiguration or disrupting established processes, enabling users to enhance their multimedia content effortlessly.

Optimized Performance for Edge Devices

Run AI-powered plugins efficiently on-device, reducing latency and maximizing resource utilization for applications requiring real-time performance in resource-constrained environments—such as IoT, autonomous systems, or offline processing.

Hardware-agnostic deployment

Deploy your models across diverse hardware environments without worrying about specific CPU, GPU, or NPU configurations. Our software ensures consistent, optimal runtime performance across multiple vendors (NVIDIA, AMD, Intel, and more).

Delivers real-time background subtraction from live video feeds, seamlessly integrating the processed stream into virtual environments or desktop presentations. It is the ideal solution for video conferencing, live streaming platforms, and professional content creation.

Applies advanced multi-target detection and tracking algorithms to dynamically blur sensitive objects or faces, ensuring strict data privacy and regulatory compliance. This capability is highly valuable for video surveillance, public safety, and video-sharing platforms. Read more about our Anonymizer.

This plugin employs a Generative Adversarial Network (GAN) to upscale video and image resolution by 4x, enhancing the quality of low-resolution content. This is valuable for media restoration, video streaming, and any application where image clarity matters.

Each plugin comes pre-configured to handle AI tasks, requiring no AI expertise. The plugins, built on Fluendo’s proprietary Raven AI Engine, integrate seamlessly with GStreamer.

Our system delivers high-performance solutions that work efficiently, even on low-power edge devices. Fluendo AI Plugins empower businesses to transform their video content workflows by improving processing speed, accuracy, and quality across a range of applications.

PLUGINS

Our standalone AI plugins are designed to work independently or as part of a larger video processing pipeline, giving you the flexibility to integrate only the features your project requires.

Bits & Bytes

Explore our blog, one byte at a time. Our team unpacks our latest news, industry insights, and in-depth articles to connect you with the multimedia world.

Read more about our work

NMS-Raster: Post-processing bounding boxes using the “G” in GPU

sports, multimedia-edge-ai, gstreamer, fluendo-ai-plugins, raven

Efficient bounding box post-processing using GPU-native NMS-Raster.

Fluendo AI Plugins v1.0.4: The power of real-time AI anonymization

fluendo-ai-plugins, anonymizer

Real-time AI video anonymization for 4K high-resolution content.

Real-time 4K face anonymization benchmark (GStreamer plugin)

broadcasting, video-surveillance, automotive, multimedia-edge-ai, gstreamer, events, fluendo-ai-plugins, anonymizer, raven

Performance benchmark analysis of real-time 4K AI video anonymization.