Fluendo and Spyrosoft BSG partner to develop AI-Powered solutions for sports video analysis and broadcast workflows

Drawing on more than ten years of experience developing sports video analysis software, we help you collect, analyze, visualize, and truly understand your sports data like never before.

Use the latest technologies and state-of-the-art machine learning algorithms to extract the most valuable information from video footage.

Streamline your video analysis process by automating tasks that require countless hours of manual effort. Optimize your workflow, save time, and focus on what matters most: making data-driven decisions that drive results.

Take full advantage of our Multimedia Edge AI tools and products designed to transform your company’s video analysis and decision-making processes.

Gain powerful insights with precise video analysis, leveraging AI-powered vision technology.

Seamlessly edit and process videos with efficiency and flexibility.

Improve reliability with proper validation systems that emulate your entire application through long-running and regression tests.

Our solutions are tailored for multiple sports, utilizing customized models and algorithms for each discipline to extract accurate, sport-specific data and actionable insights.

Our case studies

These case studies show how our developments deliver real-world results, helping businesses achieve their goals and enhance user experiences.

A client in the sports technology sector, operating across football, rugby, and hockey, required a real-time sports video analysis solution capable of running multiple AI models in parallel for tasks such as object detection, player tracking, and camera calibration. The system needed to deliver high-performance processing and improved accuracy while maintaining low latency.

A complete sports video analysis application was developed, integrating multiple AI models and functionalities across various domains, including object detection, multi-object tracking, and camera calibration. Model accuracy was improved through synthetic data generation, enabling robust performance in a wide range of sports scenarios.

The system featured a hardware-accelerated graphics environment tailored to the customer’s requirements, along with a custom, high-performance video player capable of playing back synthetic video streams. All AI models were optimized for real-time execution on edge devices, providing a responsive and field-ready sports technology solution.

Our use cases

These use cases present conceptual examples of how our ideas and technologies could address real-world industry challenges.

Historic sports footage represents a valuable asset for broadcasters, clubs, sports federations, and media archives. However, many classic matches were recorded in low resolutions, analog formats, or early digital standards, resulting in blurry visuals, limited detail, and reduced viewing quality on modern displays.

Manually restoring and enhancing these recordings is complex, time-consuming, and often requires specialized post-production workflows. As demand grows for remastered sports content, documentaries, digital archives, and modern streaming platforms, improving the quality of historic footage has become increasingly important.

With advancements in AI-based superresolution, computer vision models can reconstruct missing details and upscale legacy sports videos to higher resolutions. By processing video frames automatically, such systems can transform historic recordings into clearer, sharper versions suitable for modern screens while preserving the authenticity of the original footage.

Historic sports footage can be enhanced using deep learning superresolution models that reconstruct high-frequency details from low-resolution recordings. By analyzing spatial patterns in each frame, these models generate sharper textures, clearer player silhouettes, and more defined field lines, significantly improving the visual quality of archived matches.

This capability can be integrated into video processing pipelines using the superresolution component of Fluendo AI Plugins (FAIP), built on top of Fluendo’s AI infrastructure. The component processes video frames and produces upscaled outputs (e.g., ×3 or ×4 resolution), enabling sports organizations to restore legacy footage and adapt it for modern displays and distribution platforms.
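The page does not say how the FAIP model produces its ×3 or ×4 output. As general background only, many learned super-resolution networks (ESPCN-style models, for example) end with a sub-pixel "pixel shuffle" step: the network predicts r² low-resolution channels and then interleaves them into one image that is r times larger in each dimension. The pure-Python sketch below shows only that rearrangement, on nested lists rather than GPU tensors; `pixel_shuffle` is a hypothetical helper, not FAIP code.

```python
from typing import List

Image = List[List[float]]


def pixel_shuffle(channels: List[Image], r: int) -> Image:
    """Interleave r*r low-resolution channels (each H x W) into one (H*r) x (W*r) image.

    Illustrative sketch, not FAIP code. Channel index i*r + j supplies the pixel at
    sub-position (i, j) inside each r x r output block (the usual depth-to-space order).
    """
    if r < 1 or len(channels) != r * r:
        raise ValueError("expected exactly r*r input channels")
    h, w = len(channels[0]), len(channels[0][0])
    out: Image = [[0.0] * (w * r) for _ in range(h * r)]
    for i in range(r):          # row offset inside each output block
        for j in range(r):      # column offset inside each output block
            src = channels[i * r + j]
            for y in range(h):
                for x in range(w):
                    out[y * r + i][x * r + j] = src[y][x]
    return out


# Four 1x1 channels become one 2x2 block, i.e. a x2 upscale.
print(pixel_shuffle([[[1.0]], [[2.0]], [[3.0]], [[4.0]]], r=2))  # [[1.0, 2.0], [3.0, 4.0]]
```

With r = 3 or r = 4 the same rearrangement yields the ×3 or ×4 frame sizes mentioned above; the quality of the result depends entirely on the network that predicts the channels.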

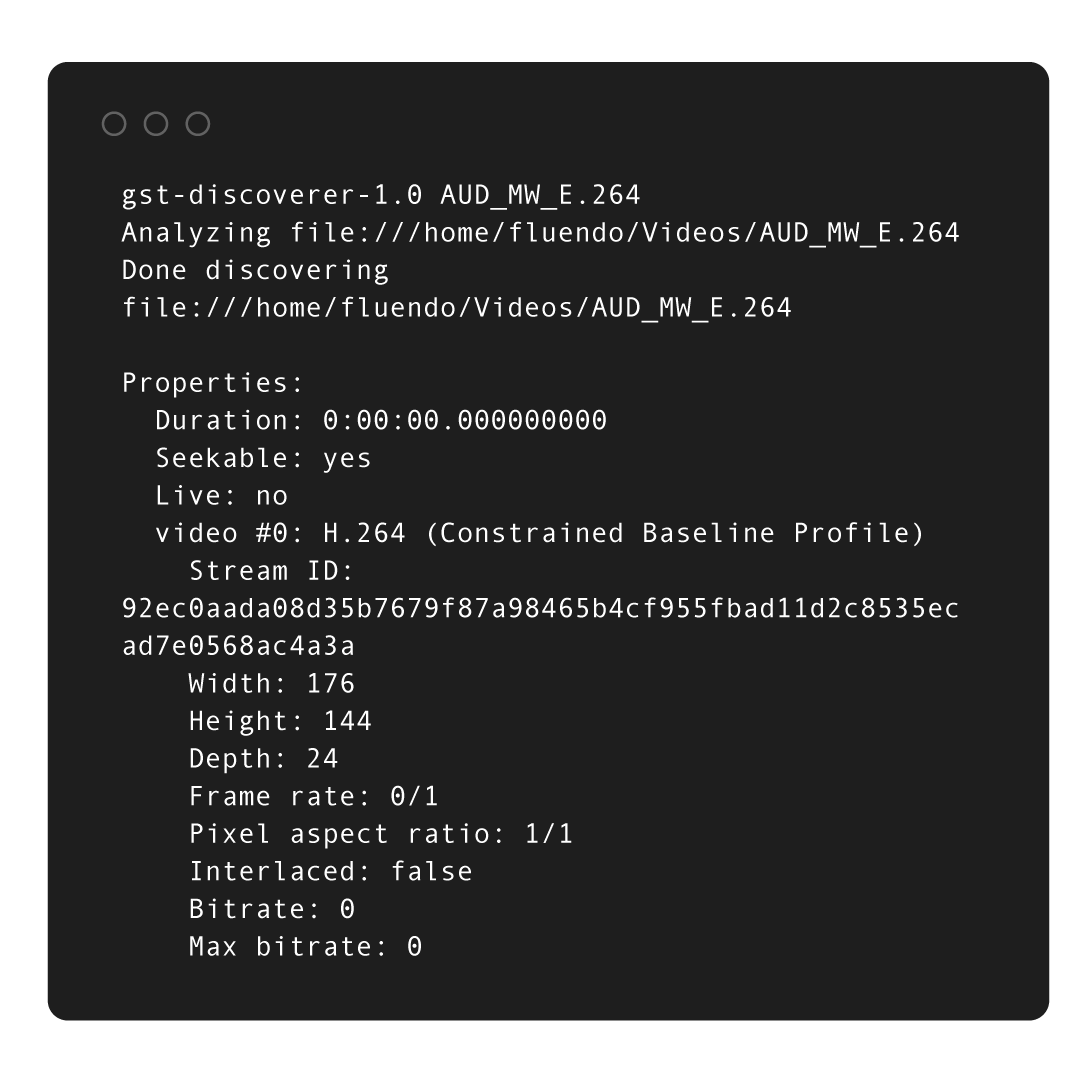

This superresolution solution is designed for seamless integration and works with standard video files, live streams, or broadcast archives using a GStreamer pipeline.

Our achievements

Transforms low-quality historic match recordings into high-resolution footage, enabling broadcasters and sports organizations to reuse valuable archive content for modern platforms.

Automates the enhancement of legacy sports footage, allowing organizations to upscale and restore large video collections efficiently without complex manual post-production workflows.

Enhances historic matches with greater visual clarity, enabling clubs, broadcasters, and media companies to create engaging documentaries, highlights, and digital experiences.

Youth and academy sports organizations increasingly record matches and training sessions for performance analysis, coaching review, and player development. However, these recordings often include minors, spectators, and staff members, creating important privacy and regulatory challenges, particularly under frameworks such as GDPR and child protection policies.

Manually anonymizing individuals in sports footage is time-consuming and difficult to scale, especially when clubs, academies, or federations manage large volumes of recorded matches, training sessions, and archived content.

With advances in AI-based person detection, tracking, and segmentation, computer vision systems can automatically detect individuals appearing in sports video and apply anonymization techniques such as face blurring or body masking. At the same time, the system can preserve useful movement and positional metadata, enabling coaches and analysts to study gameplay, tactics, and player behavior without exposing personal identities.

Our solution uses deep learning models to detect and segment individuals appearing in sports video recordings. This includes not only players on the field, but also coaches, referees, staff members, and spectators who may appear in the footage. Once detected, the system applies privacy-preserving transformations such as face blurring, pixelation, or full-body masking to ensure that individuals cannot be identified.
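Pixelation, one of the transformations listed above, replaces each small tile inside a detected region with the tile's average value. The sketch below assumes a detector has already returned a bounding box `(x, y, width, height)` and that the frame is a single-channel (grayscale) image stored as nested lists; a production pipeline would work on GPU video buffers and on every color plane. The function name and parameters are illustrative, not part of any Fluendo API.

```python
from typing import List, Tuple

Frame = List[List[int]]
Box = Tuple[int, int, int, int]  # (x, y, width, height) in pixels, from a detector (assumed)


def pixelate_region(frame: Frame, box: Box, block: int = 8) -> Frame:
    """Pixelate the boxed region in place by averaging each block x block tile (hypothetical helper)."""
    if block < 1:
        raise ValueError("block must be >= 1")
    x, y, w, h = box
    # Clip the box to the frame so partially visible people are still handled.
    x0, y0 = max(x, 0), max(y, 0)
    x1, y1 = min(x + w, len(frame[0])), min(y + h, len(frame))
    for by in range(y0, y1, block):
        for bx in range(x0, x1, block):
            ys = range(by, min(by + block, y1))
            xs = range(bx, min(bx + block, x1))
            tile = [frame[r][c] for r in ys for c in xs]
            mean = sum(tile) // len(tile)  # integer mean keeps 8-bit pixel values
            for r in ys:
                for c in xs:
                    frame[r][c] = mean
    return frame


frame = [[r * 4 + c for c in range(4)] for r in range(4)]
pixelate_region(frame, (0, 0, 2, 2), block=2)
print(frame[0][:2], frame[1][:2])  # [2, 2] [2, 2]
```

Larger `block` values remove more detail; blurring or full-body masking would replace the averaging step while keeping the same box clipping.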

Video streams are processed through an AI pipeline integrated directly into the sports video workflow. The system analyzes each frame to identify people present in the scene and automatically applies anonymization techniques while preserving the overall visual context of the match or training session.

In addition to anonymization, the system can extract structured metadata describing the scene, such as the presence and positioning of individuals on the field or in surrounding areas. This allows sports organizations to maintain useful analytical capabilities for coaching review, performance analysis, or content management, while ensuring that the identities of players, staff, and spectators remain protected.
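A minimal sketch of such a metadata record, assuming upstream detection and tracking produce, for each person, an anonymous track number, a coarse role label, and a pixel bounding box. It keeps only normalized positions (0 to 1 relative to the frame) and never copies fields that could identify someone, such as names or face crops. The schema and field names are hypothetical.

```python
import json
from typing import Dict, List

# Role labels accepted into the stored metadata (hypothetical schema).
ALLOWED_ROLES = {"player", "referee", "staff", "spectator", "unknown"}


def anonymized_record(frame_index: int, detections: List[Dict], width: int, height: int) -> str:
    """Build a JSON line describing who is where in a frame, without identity information."""
    people = []
    for det in detections:
        x, y, w, h = det["box"]  # pixel box from the detector (assumed input format)
        role = det.get("role", "unknown")
        people.append({
            "track": int(det["track"]),  # anonymous per-clip track number
            "role": role if role in ALLOWED_ROLES else "unknown",
            # Foot point (bottom center of the box), normalized to the frame size.
            "x": round((x + w / 2) / width, 4),
            "y": round((y + h) / height, 4),
        })
    # Any other detection fields (names, face embeddings, crops) are deliberately not copied.
    return json.dumps({"frame": frame_index, "people": people}, separators=(",", ":"))


print(anonymized_record(7, [{"track": 3, "role": "player", "box": (100, 200, 40, 80)}], 1000, 500))
```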

By combining automated anonymization with metadata generation, sports organizations can safely store, analyze, and share youth and academy sports recordings without exposing personal identities.

Our achievements

Automatically anonymizes players, staff, and spectators appearing in sports recordings, helping clubs and academies comply with privacy regulations while safely storing and sharing video content.

Preserves movement and scene metadata while anonymizing individuals, allowing coaches and analysts to review matches and training sessions without exposing personal identities.

Automates the anonymization of sports recordings, enabling clubs, academies, and federations to manage large volumes of video while meeting privacy and data protection requirements.

Sports clubs and academies increasingly produce live video streams, interviews, commentary shows, and behind-the-scenes content for digital platforms and social media. These broadcasts often take place in training grounds, stadiums, or mixed media areas, where children, staff members, and spectators may appear in the background.

This creates important privacy and safeguarding challenges, particularly in youth sports environments where minors must not be publicly identifiable without explicit consent.

Manual editing or post-production anonymization is not feasible for live broadcasts or real-time streaming, where video must be processed instantly before distribution.

With advances in AI-based person and face detection, video processing systems can automatically identify individuals appearing in the background of a live stream and apply anonymization techniques such as face blurring or masking in real time. This allows sports organizations to safely broadcast interviews, live shows, and training content while protecting the identity of children and other individuals present in the scene.

Our solution uses deep learning models to detect individuals appearing in live video streams, including players, staff members, spectators, and children present in the background during interviews, commentary segments, or live coverage.

Each video frame is analyzed in real time to identify visible faces or people in the scene. Once detected, the system automatically applies privacy-preserving transformations such as face blurring, pixelation, or masking, ensuring that individuals cannot be identified while preserving the visual integrity of the broadcast.

The system is designed to operate within high-performance live production environments, supporting resolutions up to 8K (including 4K) and high frame rates such as 60 fps and 120 fps. Thanks to a highly optimized multimedia AI pipeline, anonymization runs with very low latency, so the broadcast workflow remains uninterrupted.
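A quick worked calculation shows what these figures imply. At a given frame rate, all per-frame work must either finish within one frame period or be overlapped across pipeline stages, and the pixel rate grows with both resolution and frame rate. The helpers below only do that arithmetic; the stage timings in the example are made-up placeholders, not measurements of Fluendo's pipeline.

```python
from typing import Sequence


def frame_period_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, to keep up with the input rate."""
    return 1000.0 / fps


def pixel_rate(width: int, height: int, fps: int) -> int:
    """Pixels per second the pipeline must ingest at this resolution and frame rate."""
    return width * height * fps


def fits_sequentially(stage_ms: Sequence[float], fps: float) -> bool:
    """True if running the stages strictly back to back stays within one frame period.

    Pipelined stages can overlap, so failing this check does not rule a design out;
    it only means the stages cannot run one after another for every frame.
    """
    return sum(stage_ms) <= frame_period_ms(fps)


for name, (w, h) in {"4K": (3840, 2160), "8K": (7680, 4320)}.items():
    for fps in (60, 120):
        print(f"{name}@{fps}: {frame_period_ms(fps):.2f} ms/frame, "
              f"{pixel_rate(w, h, fps) / 1e9:.2f} Gpixel/s")

# Hypothetical stage timings in ms: convert, detection, blur, encoder hand-off.
print(fits_sequentially([2.0, 4.5, 0.8, 0.5], fps=120))
```

For example, 8K at 120 fps leaves about 8.33 ms per frame while moving roughly 3.98 billion pixels per second, which is one reason high-frame-rate pipelines typically keep frames on the GPU and overlap stages instead of running them back to back.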

The solution integrates directly into professional streaming and broadcast pipelines, enabling anonymization to occur before encoding or distribution to streaming platforms. Processing can be deployed on edge AI devices located in stadiums or production units, or in cloud-based infrastructures, depending on the production workflow.

By combining optimized AI inference with high-performance video processing, sports organizations can safely produce interviews, analyst shows, live streams, and behind-the-scenes content while protecting the identities of children, spectators, and staff—even in high-resolution, high-frame-rate live productions running on compact edge hardware.

Our achievements

Automatically anonymizes individuals appearing in the background of interviews and live streams, helping sports clubs safeguard minors and respect privacy requirements.

Applies anonymization directly within the live video pipeline, allowing clubs to broadcast interviews, commentary shows, and behind-the-scenes content without manual editing.

Processes live video streams with minimal latency, enabling anonymization to run seamlessly within existing sports production and streaming infrastructures.

Sports organizations generate and store vast amounts of video recordings, match reports, scouting notes, press releases, and analytical documents. Over time, these collections grow into extensive archives that are difficult to navigate, especially when metadata is incomplete or inconsistently structured.

Traditional search systems rely on manual tagging and keyword-based queries, which often fail to capture the contextual meaning of the information stored in these archives. As a result, valuable knowledge remains difficult to retrieve, slowing down research, media production, and editorial workflows.

Recent advances in Large Language Models (LLMs) and semantic search systems enable a new way to explore large multimedia and document collections. Instead of relying on keywords, users can query archives using natural language questions and retrieve results based on semantic relevance.

By combining AI-powered document understanding, vector search, and contextual reasoning, sports organizations can explore their archives more efficiently, accelerate research workflows, and unlock valuable insights across historical content.

Our solution uses Large Language Models (LLMs) combined with semantic vector databases to enable advanced exploration of sports media and document archives. Video transcripts, match reports, scouting notes, and editorial documents are processed using AI models that extract meaningful textual representations and convert them into semantic embeddings.
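Before embedding, long transcripts and reports are commonly split into overlapping chunks so that each embedding covers a focused passage. The page does not specify the chunking strategy used; the sketch below assumes simple word-based windows with overlap, and the default sizes are illustrative only.

```python
from typing import List


def chunk_words(text: str, size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into windows of `size` words, each sharing `overlap` words with the previous one.

    Illustrative sketch: real systems often chunk by sentences, tokens, or transcript segments.
    """
    if size < 1 or not 0 <= overlap < size:
        raise ValueError("need size >= 1 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):  # this window already reached the end of the text
            break
    return chunks


print(chunk_words("a b c d e f g", size=3, overlap=1))  # ['a b c', 'c d e', 'e f g']
```

The overlap means a sentence that straddles a window boundary still appears whole in at least one chunk when it is shorter than the overlap, which helps retrieval later.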

These embeddings are stored in a vector database that allows users to perform natural language queries instead of relying on rigid keyword searches. The system identifies semantically related content across large archives and retrieves relevant documents, clips, or reports even when exact keywords are not present.
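At its core, the retrieval step ranks stored embeddings by their similarity to the query embedding. The sketch below uses brute-force cosine similarity over a dictionary; real vector databases use approximate nearest-neighbor indexes to scale, and the three-dimensional vectors and document ids here are toy stand-ins for the output of an embedding model, which is assumed rather than shown.

```python
import math
from typing import Dict, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: Sequence[float], index: Dict[str, Sequence[float]], k: int = 3) -> List[str]:
    """Return the ids of the k stored documents most similar to the query (brute force)."""
    ranked = sorted(index, key=lambda doc_id: cosine(query, index[doc_id]), reverse=True)
    return ranked[:k]


# Toy index: hypothetical archive items with made-up embeddings.
index = {
    "match_report_1998_final": [0.9, 0.1, 0.0],
    "scouting_note_winger": [0.1, 0.9, 0.1],
    "press_release_stadium": [0.0, 0.2, 0.9],
}
print(top_k([0.8, 0.2, 0.0], index, k=2))  # ['match_report_1998_final', 'scouting_note_winger']
```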

On top of the semantic retrieval layer, LLMs can analyze the retrieved information and generate structured outputs such as summaries, contextual explanations, or draft editorial content. This allows journalists, analysts, and club staff to quickly explore historical information, understand trends, and produce content based on large volumes of archived material.
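One common way to keep generated output grounded is to pass the retrieved passages to the LLM together with the question and ask it to answer only from them, citing passage numbers. The sketch below only assembles such a prompt; which model is called, and how, is left out because the page does not specify it. The instruction wording and the archive ids are illustrative assumptions.

```python
from typing import List, Tuple


def build_grounded_prompt(question: str, passages: List[Tuple[str, str]]) -> str:
    """Assemble an LLM prompt from (source_id, text) passages returned by retrieval (hypothetical format)."""
    numbered = "\n".join(
        f"[{i}] ({source}) {text.strip()}" for i, (source, text) in enumerate(passages, start=1)
    )
    return (
        "Answer the question using only the numbered archive passages below. "
        "Cite passages as [n]. If they do not contain the answer, say so.\n\n"
        f"Passages:\n{numbered}\n\n"
        f"Question: {question.strip()}\nAnswer:"
    )


print(build_grounded_prompt(
    "How did the team's pressing change after 2015?",
    [("match_report_2014_03", "Side sat deep and countered."),
     ("scouting_note_2016_11", "High press triggered on back passes.")],
))
```

Numbering the passages lets a journalist or analyst trace each statement in a summary back to the archived document it came from.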

By integrating semantic search with modern multimedia infrastructures, sports organizations can transform fragmented document collections into navigable knowledge bases, enabling faster research, improved storytelling, and more efficient use of historical sports data.

Our achievements

Enables natural language exploration of large collections of match reports, transcripts, and editorial documents, making it easier to discover relevant information across historical sports data.

Uses AI-powered retrieval and summarization to help analysts, journalists, and club staff quickly gather insights and produce content from extensive document archives.

Combines semantic indexing and LLM reasoning to organize large collections of sports documents and media into an intelligent system that supports advanced queries and contextual exploration.

Sports clubs, broadcasters, and analytics teams increasingly rely on data-driven insights to understand player performance, tactical behavior, and match dynamics. Traditionally, these metrics are collected using dedicated tracking systems or manual annotation workflows, which can be costly, complex to deploy, and difficult to integrate into existing video infrastructures.

Recent advances in AI-based computer vision enable sports analytics to be derived directly from video. By analyzing match footage frame by frame, AI models can estimate player positioning, movement trajectories, and spatial relationships across the field.

These insights can be transformed into valuable performance metrics such as distance traveled, top speed, positioning patterns, and heatmaps, enabling coaches, analysts, and media teams to better understand player behavior and team dynamics.
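As a worked example of how two of these metrics follow from tracked positions: distance traveled is the sum of the straight-line steps between consecutive positions, and speed is each step divided by the time between samples. The sketch assumes positions are already in meters on the pitch (that is, after camera calibration) and sampled once per frame; the function name is hypothetical.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # pitch coordinates in meters (assumed already calibrated)


def distance_and_top_speed(track: List[Point], fps: float) -> Tuple[float, float]:
    """Return (total distance in m, top speed in m/s) for one player's per-frame track."""
    dt = 1.0 / fps
    total, top = 0.0, 0.0
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        step = math.hypot(x1 - x0, y1 - y0)  # straight-line distance between samples
        total += step
        top = max(top, step / dt)
    return total, top


# Three samples one second apart: two 5 m steps.
print(distance_and_top_speed([(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)], fps=1.0))  # (10.0, 5.0)
```

Raw frame-to-frame speeds are noisy because small position errors are divided by a short interval, so a practical system would smooth the track (or average over several frames) before reporting top speed; multiplying m/s by 3.6 gives km/h.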

When combined with modern multimedia pipelines, these AI-generated insights can be embedded directly into the video stream as structured metadata, enabling downstream systems to access analytics data in real time without requiring separate data pipelines.

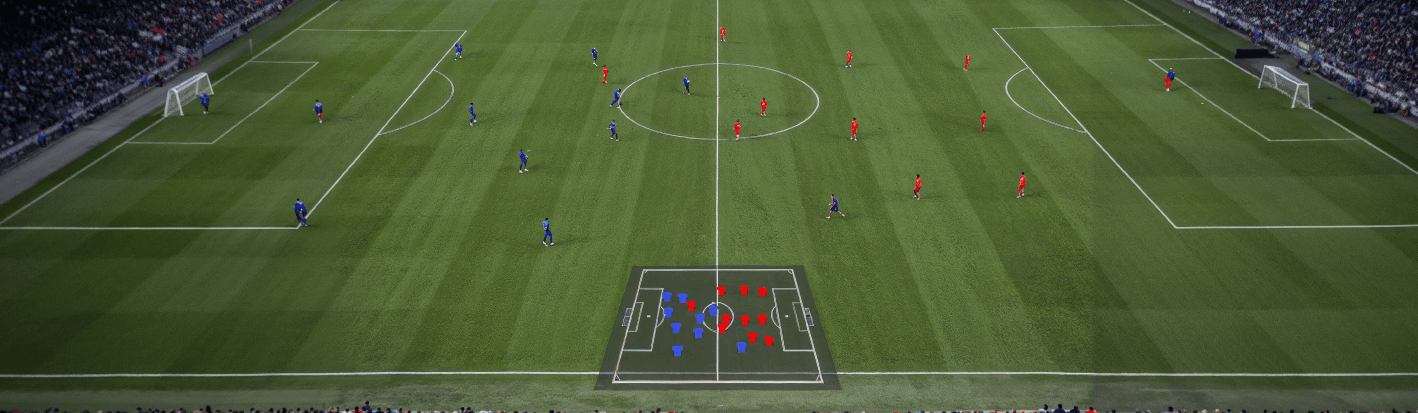

Our solution applies deep learning models to analyze sports video and extract structured information about players and their movements on the field. The AI pipeline processes each frame to identify athletes, estimate their spatial positions, and derive time-varying motion-related metrics.
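Estimating a player's spatial position usually means mapping an image point, such as the bottom center of the player's bounding box, into pitch coordinates. For a flat pitch this mapping is a planar homography, a 3x3 matrix obtained from camera calibration. The sketch assumes the matrix is already known; the example matrix is a toy value, not a real calibration, and both helpers are hypothetical.

```python
from typing import List, Tuple

Matrix3 = List[List[float]]


def image_to_pitch(H: Matrix3, u: float, v: float) -> Tuple[float, float]:
    """Map image pixel (u, v) to pitch coordinates with homography H (homogeneous divide)."""
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if w == 0:
        raise ValueError("point maps to infinity under this homography")
    return x / w, y / w


def foot_point(box: Tuple[float, float, float, float]) -> Tuple[float, float]:
    """Bottom center of an (x, y, width, height) box, where the player touches the ground."""
    x, y, w, h = box
    return x + w / 2, y + h


# Toy homography: halve both axes, then shift by (-10, -5) meters.
H = [[0.5, 0.0, -10.0], [0.0, 0.5, -5.0], [0.0, 0.0, 1.0]]
print(image_to_pitch(H, *foot_point((100.0, 40.0, 20.0, 60.0))))  # (45.0, 45.0)
```

Using the foot point rather than the box center matters because only points on the ground plane are mapped correctly by a pitch homography.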

From this visual analysis, the system can generate a variety of performance indicators, including player positioning, distance traveled, movement intensity, speed estimation, and spatial heatmaps. These metrics provide valuable insights for performance analysis, coaching review, and tactical evaluation.
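A spatial heatmap can be built by counting how often a player's pitch position falls into each cell of a grid. The sketch assumes positions in meters on a 105 x 68 m pitch (a common football size, used here only as a default) and 5 m cells; both are adjustable assumptions, and the function name is hypothetical.

```python
import math
from typing import List, Tuple


def occupancy_heatmap(positions: List[Tuple[float, float]], length: float = 105.0,
                      width: float = 68.0, cell: float = 5.0) -> List[List[int]]:
    """Count position samples per grid cell; rows run across the width, columns along the length."""
    cols, rows = math.ceil(length / cell), math.ceil(width / cell)
    grid = [[0] * cols for _ in range(rows)]
    for x, y in positions:
        if 0.0 <= x <= length and 0.0 <= y <= width:  # ignore off-pitch detections
            col = min(int(x // cell), cols - 1)  # clamp points lying exactly on the far lines
            row = min(int(y // cell), rows - 1)
            grid[row][col] += 1
    return grid


grid = occupancy_heatmap([(0.0, 0.0), (52.5, 34.0), (104.9, 67.9), (105.0, 68.0)])
print(len(grid), len(grid[0]), grid[13][20])  # 14 21 2
```

If positions are sampled once per frame, dividing each count by the frame rate turns the grid into seconds spent per cell, which is what a rendered heatmap usually shows.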

In addition to player detection, the system can also generate precise segmentation masks of athletes, enabling advanced visual effects and broadcast graphics. These masks allow production teams to create layered overlays such as tactical visualizations, sponsor graphics, or dynamic highlights while maintaining a clear separation between players and the background scene.

All generated insights can be packaged as structured metadata and embedded directly into the video stream using multimedia container formats and metadata channels supported by the video pipeline. By integrating this process into a GStreamer-based workflow, the system enables AI-generated sports analytics to travel alongside the video content itself.
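Whatever container or metadata channel carries them, per-frame analytics need a timestamp that ties each record to its frame. GStreamer expresses buffer timestamps in nanoseconds, and for a rational frame rate num/den (such as 30000/1001) the timestamp of frame n is n × 1,000,000,000 × den / num. The sketch below computes that value with integer arithmetic and serializes one record as compact JSON bytes; how those bytes are attached to the stream is left open, and the record schema is hypothetical.

```python
import json
from typing import Dict

NS_PER_SECOND = 1_000_000_000


def frame_pts_ns(frame_index: int, fps_num: int, fps_den: int = 1) -> int:
    """Presentation timestamp in nanoseconds for a frame at a rational frame rate (floored)."""
    return frame_index * NS_PER_SECOND * fps_den // fps_num


def analytics_payload(frame_index: int, fps_num: int, fps_den: int, metrics: Dict) -> bytes:
    """Serialize one frame's analytics as compact UTF-8 JSON keyed by its timestamp (hypothetical schema)."""
    record = {"pts_ns": frame_pts_ns(frame_index, fps_num, fps_den), "metrics": metrics}
    return json.dumps(record, separators=(",", ":"), sort_keys=True).encode("utf-8")


print(analytics_payload(30, 30000, 1001, {"player_7": {"speed_mps": 6.2}}))
# b'{"metrics":{"player_7":{"speed_mps":6.2}},"pts_ns":1001000000}'
```

Keying each record by the same timestamp as its video frame is what lets a graphics engine or dashboard downstream line the analytics up with the picture without a separate synchronization channel.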

This approach allows downstream systems—including broadcast graphics engines, analytics dashboards, and archive platforms—to access performance data directly from the video stream, simplifying integration and enabling real-time data-driven sports productions.

Our achievements

Uses AI to derive player positioning, movement patterns, and performance metrics directly from match footage, eliminating the need for specialized tracking hardware.

Generates player segmentation masks and spatial data that allow broadcasters to create dynamic overlays, tactical visualizations, and enhanced viewing experiences.

Packages AI-generated metrics as structured metadata within the video pipeline, enabling analytics data to travel alongside the video for seamless integration with broadcast, analysis, and archival systems.

Bits & Bytes

Explore our blog, one byte at a time. Our team unpacks the latest news, industry insights, and in-depth articles to connect you with the multimedia world.

Fluendo and Spyrosoft BSG partner for AI sports video analysis.

Efficient bounding box post-processing using GPU-native NMS-Raster.

Extracting tactical soccer metrics from broadcast video with real-time AI.

AI-powered 4x video upscaling with Fluendo's GStreamer plugin.