Beyond vibe coding: Scaling AI software architecture with spec-driven development

Written by Oriol Garcia, April 27, 2026

During the development of IA·Veu, we confirmed a harsh industry reality: AI coding assistants make developers fast, but they also make large codebases fragile.

Most of this fragility stems from what the industry now calls “vibe coding”: a development style in which engineers generate code from high-level, conversational prompts and “vibes” rather than from strict technical requirements. This works well for small, bounded tasks, but on a long-term project it eventually hits a wall:

- Hallucinations: The AI generates plausible but logically incorrect code.

- Context loss: As the codebase grows, the “vibe” becomes too complex for the LLM to track.

- Architectural drift: Small, isolated AI decisions gradually pull the project away from its core design.

We realized that the bottleneck wasn’t the quality of our individual prompts. Instead, we needed to move away from the “vibe” and establish a deterministic framework: a process that bounded the scope of every task and provided persistent context across the entire lifecycle.

%%{init: {'theme': 'neutral'}}%%

flowchart LR

subgraph VC["Vibe Coding"]

direction TB

v1([Prompt LLM]) --> v2[Generate Code]

v2 --> v3{Works?}

v3 -->|No — paste error back| v1

v3 -->|Yes| v4([Ship])

end

subgraph SDD["Spec-Driven Development"]

direction TB

s1([constitution.md]) --> s2[spec.md]

s2 --> s3{{👤 Human Review}}

s3 -->|Approved| s4[plan.md + tasks.md]

s4 --> s5{{👤 Human Approve}}

s5 -->|Approved| s6[AI Implements]

s6 --> s7([Ship])

end

VC ~~~ SDD

spec-kit and specification-driven development

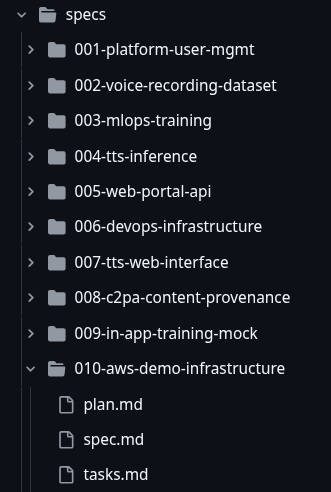

To establish this process, we adopted an emerging methodology called Specification-Driven Development (SDD). This approach shifted the source of truth in our project from the codebase to detailed specifications, plans, and other generated artifacts. Instead of asking the AI to implement a feature directly from a prompt, we first authored a strict specification covering user stories, edge cases, and functional requirements. We then translated it into a structured plan split into granular tasks. Only then did we let the AI write the code, which left it far less room to hallucinate or produce unexpected behavior.

We operationalized this with spec-kit, an open-source CLI toolkit from GitHub that provided a structured lifecycle and agent slash commands for every phase. The SDD lifecycle we followed consisted of five phases:

| Phase | Artifact | Purpose |
|---|---|---|
| 1 | constitution.md | Immutable project-wide principles: the laws every agent had to follow |
| 2 | spec.md | Feature contracts: system behavior, user stories, edge cases |
| 3 | plan.md | Architecture blueprint reviewed and approved before code generation |
| 4 | tasks.md | Granular, dependency-ordered work items traceable back to spec clauses |
| 5 | Implementation | AI executes tasks iteratively; humans review between phases |
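One way to picture the gating in this lifecycle is as a small state model in which implementation is only unlocked once every earlier artifact has been approved. The sketch below is purely illustrative: `Phase`, `FeatureLifecycle`, and its methods are hypothetical names, not part of spec-kit, and the strict ordering is our simplification of the table above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Phase(Enum):
    """The five lifecycle phases from the table (names are illustrative)."""
    CONSTITUTION = 1
    SPEC = 2
    PLAN = 3
    TASKS = 4
    IMPLEMENTATION = 5


@dataclass
class FeatureLifecycle:
    """Hypothetical tracker of which phases a human has approved."""
    approved: set[Phase] = field(default_factory=set)

    def approve(self, phase: Phase) -> None:
        # A phase can only be approved once all earlier phases are approved.
        missing = [p.name for p in Phase
                   if p.value < phase.value and p not in self.approved]
        if missing:
            raise ValueError(f"Cannot approve {phase.name}; pending: {missing}")
        self.approved.add(phase)

    def can_implement(self) -> bool:
        # The agent may write code only after the four artifacts are approved.
        required = [p for p in Phase if p is not Phase.IMPLEMENTATION]
        return all(p in self.approved for p in required)
```

The point of the sketch is the ordering constraint: trying to approve a plan before its spec raises an error, which mirrors the review gates between phases.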

constitution.md: Repository-level governance

The constitution defined the fundamental principles for the entire development process. In this document, we established ten strict rules covering best practices, Test-Driven Development (TDD), CI/CD, and local-to-cloud integration. For our team, the two most critical rules were:

### II. Test-First Development (NON-NEGOTIABLE)

- **Red-Green-Refactor**: Tests MUST be written first, verified to fail, then implementation begins

- **Coverage Minimum**: 80% for all new code; critical paths require 100%

- **Test Types Required**: Unit, Integration, and Contract tests

- **Test Independence**: Each test MUST run in isolation; no shared state

### X. Complete Functional Implementation (NON-NEGOTIABLE)

- **No Placeholder Implementations**: Stubs are PROHIBITED in delivered features

- **No Chained Plans**: Plans requiring "Phase 2" for basic functionality are INVALID; reduce scope instead

spec.md: Contracts, user stories, and edge cases before code

The spec.md (specification) defined the what, not the how. This was a product-oriented file where we detailed the user stories, functional requirements, edge cases, and Given/When/Then acceptance scenarios. For instance, here is how we specified the C2PA content provenance feature:

# Feature Specification: C2PA Content Provenance for Generated Audio

**Feature ID**: 008-c2pa-content-provenance

## Functional Requirements

- **FR-001**: System MUST embed a C2PA manifest in every WAV file produced by `generate_audio_task`, between generation and storage.

- **FR-006**: C2PA signing failure MUST NOT block audio delivery (graceful degradation).

- **FR-009**: Signing MUST work with in-memory bytes (no temp files on disk), compatible with both local and S3 storage backends.

## User Story 1 — C2PA Manifest Embedded in Generated Audio (P2)

As a Content Creator or Legal Compliance Officer, I need every generated audio file to include a C2PA manifest so that the audio's origin and synthetic nature are cryptographically verifiable.

**Acceptance Scenarios**:

1. **Given** `generate_audio_task` produces WAV bytes, **When** C2PA signing runs, **Then** the stored WAV file contains a valid manifest embedded as a RIFF chunk, without altering the audio samples.

2. **Given** C2PA signing fails, **When** the Celery task handles the error, **Then** audio is still saved and served, and `GeneratedAudio.c2pa_signed` is set to `False`. [...]

## Edge Cases

- **Signing fails**: Audio saved without manifest. User not blocked.

- **Certificate not configured**: Signing skipped silently. [...]
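To show how requirements like FR-006 and FR-009 constrain the code, here is a minimal sketch of the graceful-degradation behavior. It is not our production code: `sign_or_passthrough` and `SigningResult` are hypothetical names, and the actual C2PA call is deliberately left out and injected as a plain `signer` callable, so nothing here assumes a particular signing API.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Optional

logger = logging.getLogger(__name__)


@dataclass
class SigningResult:
    wav_bytes: bytes   # bytes to store, signed or not
    c2pa_signed: bool  # mirrors the `GeneratedAudio.c2pa_signed` flag


def sign_or_passthrough(
    wav_bytes: bytes,
    signer: Optional[Callable[[bytes], bytes]],
) -> SigningResult:
    """Sign in memory if possible; never block delivery on failure."""
    if signer is None:
        # Edge case: certificate not configured, signing skipped silently.
        return SigningResult(wav_bytes, False)
    try:
        # FR-009: the signer works on in-memory bytes, no temp files.
        return SigningResult(signer(wav_bytes), True)
    except Exception:
        # FR-006: log the failure and deliver the unsigned audio.
        logger.exception("C2PA signing failed; delivering unsigned audio")
        return SigningResult(wav_bytes, False)
```

Each branch corresponds to one acceptance scenario or edge case in the spec, which is what makes the resulting tests easy to trace back to a clause.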

plan.md & tasks.md: Architecture reviewed before code runs

If the spec.md defined the what, the plan.md defined the how. This file was where we made the technical decisions, selected the technologies and libraries, and established the methodologies to follow.

Finally, tasks.md detailed how to carry out the plan. It split the implementation into granular tasks, categorized them by priority, and identified which ones could be executed in parallel:

## Stage 1: Environment Setup

- [x] T801 [US1] Add `c2pa-python>=0.28.0` to requirements

- [x] T805 [US1] Configure test certificates

- **Note**: Library rejects self-signed certs; use es256 test fixtures.

[...]

## Stage 2: Model & Migration

- [x] T809 [US1] Add `c2pa_signed` boolean field to `GeneratedAudio`

- [x] T810 [US1] Create and apply database migration [...]
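Because tasks.md follows a regular line format, it can be read programmatically. The sketch below parses the checkbox lines from the excerpt above into structured records. The regular expressions are our assumption about the format, inferred only from this excerpt, and `parse_tasks` is a hypothetical helper rather than a spec-kit feature.

```python
import re
from dataclasses import dataclass

# Assumed line shapes, inferred from the excerpt above.
STAGE_RE = re.compile(r"^## (?P<stage>.+)$")
TASK_RE = re.compile(
    r"^- \[(?P<done>[ xX])\] (?P<id>T\d+) \[(?P<story>US\d+)\] (?P<title>.+)$"
)

SAMPLE = """\
## Stage 1: Environment Setup
- [x] T801 [US1] Add `c2pa-python>=0.28.0` to requirements
- [x] T805 [US1] Configure test certificates
- **Note**: Library rejects self-signed certs; use es256 test fixtures.
## Stage 2: Model & Migration
- [x] T809 [US1] Add `c2pa_signed` boolean field to `GeneratedAudio`
- [x] T810 [US1] Create and apply database migration
"""


@dataclass
class Task:
    stage: str
    task_id: str
    story: str
    title: str
    done: bool


def parse_tasks(markdown: str) -> list[Task]:
    """Collect task lines, remembering the stage heading they appear under."""
    tasks: list[Task] = []
    stage = ""
    for raw in markdown.splitlines():
        line = raw.strip()
        if m := STAGE_RE.match(line):
            stage = m["stage"]
        elif m := TASK_RE.match(line):
            done = m["done"].lower() == "x"
            tasks.append(Task(stage, m["id"], m["story"], m["title"], done))
    return tasks
```

Lines that are not tasks, such as the note under T805, are simply skipped.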

Implementation: AI executes, humans review

With all these files reviewed and approved, the only remaining step was to implement the tasks.

We found that tasks.md usually grouped the tasks into phases (labeled as stages in the excerpt above). In our experience, we got better results when we instructed the AI agent to implement just one or two phases per prompt iteration instead of asking it to implement everything at once. This iterative approach let us test and review each phase immediately and catch issues early, before they could propagate through the whole implementation.
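The "one or two phases per iteration" habit can be sketched as a simple batching loop. This is only an illustration: `batch_phases` and `build_prompt` are hypothetical helpers, the prompt wording is invented for the example, and the third phase name is made up.

```python
from itertools import islice
from typing import Iterable, Iterator


def batch_phases(phases: Iterable[str], size: int = 2) -> Iterator[list[str]]:
    """Yield consecutive groups of at most `size` phases."""
    it = iter(phases)
    while batch := list(islice(it, size)):
        yield batch


def build_prompt(batch: list[str]) -> str:
    """Hypothetical prompt text asking the agent to stop after this batch."""
    lines = "\n".join(f"- {name}" for name in batch)
    return (
        "Implement only the following phases from tasks.md, "
        "then stop for review:\n" + lines
    )


PHASES = [
    "Stage 1: Environment Setup",
    "Stage 2: Model & Migration",
    "Stage 3: Signing Service",  # hypothetical, not from the excerpt
]

for batch in batch_phases(PHASES):
    print(build_prompt(batch))
    # A human reviews and tests the result here before the next batch.
```

The review step between batches is the part that matters; the batching itself is trivial.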

Day 2: Maintaining the specification chain

What happened when we had fully implemented a specification and needed to modify something?

Since the spec was our source of truth, we didn’t modify the codebase directly. Instead, we updated the specification to reflect the new requirements. Then we prompted the agent to update the corresponding plan and task files to align with this new approach. Once the modifications were fully reflected in the planning artifacts, we let the AI implement the updated tasks.

This approach kept the codebase aligned with the specification as the real source of truth, so the specification effectively served as living documentation.

For larger modifications that went beyond a simple requirement change, we adopted an iterative process: instead of updating the existing file, we created an entirely new specification. This method had the added benefit of preserving the complete architectural history of the project.

%%{init: {'theme': 'neutral'}}%%

flowchart TD

A([Requirement changes])

A --> B["Update spec.md\nnot source code"]

B --> C["Regenerate plan.md\n+ tasks.md"]

ctx(["constitution.md + spec history\nalways in context"]) -. informs .-> C

C --> D["Agent identifies\naffected modules"]

D --> E{{👤 Human review}}

E -->|Approved| F[Execute affected tasks]

F --> G([✅ Spec chain intact])
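Keeping the spec chain intact implies that every task still points at something that exists in the spec. A minimal sketch of such a check follows, assuming that a `[US1]` tag in tasks.md refers to a `## User Story 1` heading in spec.md. That mapping is our reading of the excerpts, and `untraceable_tasks` is a hypothetical helper.

```python
import re

# Assumed conventions: "## User Story <n>" in spec.md, "[US<n>]" in tasks.md.
STORY_HEADING_RE = re.compile(r"^## User Story (\d+)\b", re.MULTILINE)
TASK_STORY_RE = re.compile(r"^- \[[ xX]\] (T\d+) \[US(\d+)\]", re.MULTILINE)


def untraceable_tasks(spec_md: str, tasks_md: str) -> list[str]:
    """Return IDs of tasks whose user story has no heading in the spec."""
    known_stories = set(STORY_HEADING_RE.findall(spec_md))
    return [
        task_id
        for task_id, story in TASK_STORY_RE.findall(tasks_md)
        if story not in known_stories
    ]


spec = "## User Story 1 - C2PA Manifest Embedded in Generated Audio (P2)\n"
tasks = (
    "- [x] T801 [US1] Add `c2pa-python>=0.28.0` to requirements\n"
    "- [ ] T999 [US7] Hypothetical task pointing at a missing story\n"
)
orphans = untraceable_tasks(spec, tasks)  # only T999 lacks a matching story
```

A check like this could run before the human review step, so that reviewers only see plans whose tasks all trace back to the spec.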

SDD in practice: What it solved for IA·Veu

Recently, we worked on IA·Veu, Fluendo’s generative AI voice platform in Catalan. For this project, we decided to use SDD with spec-kit to architect the complete application ecosystem. We built the Django REST Framework backend, the React frontend components, the Docker infrastructure, the Celery async pipelines, and the entire testing suite using this approach.

This was a major challenge because the project spanned a very large context. If we had tried to build it by prompting the AI directly (vibe coding), we would quickly have lost focus and context across the stack. SDD helped because it forced us to research and decide exactly what we needed to do in critical areas before writing any code.

For example, in a full-stack environment like this, changing a Django model meant we also needed to keep the React frontend and the Celery pipelines perfectly consistent. With SDD, we mapped out all these dependencies in the plan first. This way, we aligned all the layers before asking the AI to write a single line of code, entirely avoiding the typical trial and error of throwing isolated prompts and seeing what breaks.
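The dependency mapping described above can be thought of as a graph walk: start from the changed module and collect everything downstream. The sketch below uses a breadth-first traversal over a hand-written map. The module names in `DEPENDENTS` are hypothetical examples, not the actual IA·Veu layout, and `affected_by` is an illustrative helper.

```python
from collections import deque

# Hypothetical map: module -> modules that depend on it directly.
DEPENDENTS = {
    "django:GeneratedAudio model": ["drf:serializer", "celery:generate_audio_task"],
    "drf:serializer": ["react:audio component"],
    "celery:generate_audio_task": [],
    "react:audio component": [],
}


def affected_by(changed: str, dependents: dict[str, list[str]]) -> list[str]:
    """Return every module downstream of `changed`, in breadth-first order."""
    seen = {changed}
    order: list[str] = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for dep in dependents.get(node, []):
            if dep not in seen:  # also guards against cycles
                seen.add(dep)
                order.append(dep)
                queue.append(dep)
    return order
```

Calling `affected_by("django:GeneratedAudio model", DEPENDENTS)` lists the serializer, the Celery task, and the React component, which is the set of layers a plan would need to cover before the agent touches the model.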

| What we built with SDD | Result |
|---|---|
| Feature specifications written | 10 |
| Constitutional principles enforced | 10 |
| Backend tests (passing / total) | 363 / 363 |
| Backend code coverage | 69.8% (target: 64%) |
| Frontend E2E tests (passing / total) | 109 / 116 |

| Failure mode | How SDD solved it |
|---|---|
| Context amnesia | Constitutional principles traveled with every prompt, across backend, frontend, and infrastructure |
| Security regression | Compliance requirements encoded as spec FRs with explicit acceptance scenarios, not standup agreements |
| Architectural drift | Constitution Check gate kept all engineers and AI agents on the same reviewed blueprint |
| Untraceable decisions | Every task traces to a plan, to a spec, to the constitution, all versioned in Git |

From code typist to AI architect

IA·Veu was our first hands-on experience with Specification-Driven Development. We found it to be a powerful philosophy that changed the way we interacted with AI. In this workflow, our role shifted fundamentally: we stopped acting as mere code typists and started operating as software architects.

The tooling will inevitably evolve, but the core lesson we took away remains: the most effective thing you can give an AI coding agent is not a better prompt but a better specification.

If you’re interested in the project, in exploring collaboration opportunities, or in following its progress, get in touch.